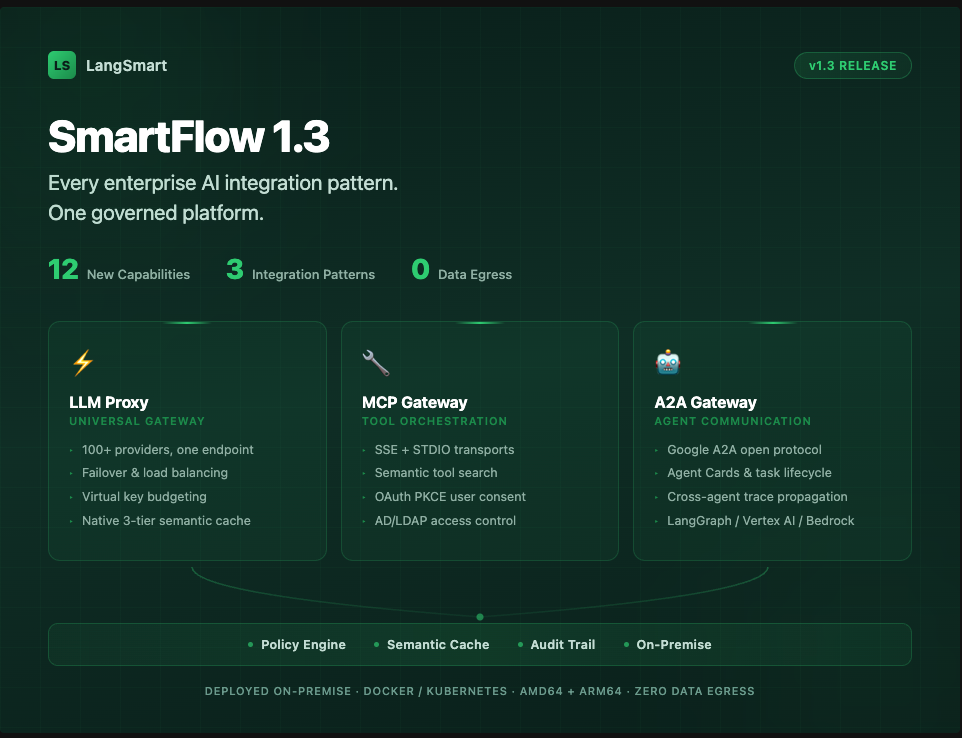

SmartFlow 1.3: Every Enterprise AI Integration Pattern, One Governed Platform

Today we’re shipping SmartFlow 1.3 with 12 new capabilities across caching, MCP tool orchestration, agent-to-agent communication, and enterprise security. This release represents a significant expansion of what SmartFlow governs: with 1.3, the platform now covers every way enterprises interact with AI.

When we built SmartFlow, the problem was straightforward—enterprises had dozens of applications making LLM API calls with no centralized visibility, compliance, or cost control. That remains the core use case. But the landscape has evolved. Organizations are now deploying MCP tool servers to give agents access to internal systems, building agent-to-agent workflows where AI systems delegate tasks to each other, and connecting these systems to an expanding ecosystem of external tools and services.

Each of these integration patterns creates new governance surface area. SmartFlow 1.3 extends our control plane to cover all three—with a consistent policy, caching, and audit layer across every interaction.

MCP Gateway: Complete Tool Orchestration

The Model Context Protocol (MCP) is rapidly becoming the standard for connecting AI agents to external tools and data sources. SmartFlow 1.3 delivers the most complete enterprise MCP implementation available, adding five new capabilities to our existing MCP server registry.

SSE and STDIO Transport Support

SmartFlow now supports all three MCP transport protocols, with SSE and STDIO new in this release. Server-Sent Events (SSE) enables real-time streaming from MCP servers that push data over persistent connections, which is useful for live monitoring, event-driven integrations, and real-time data feeds. STDIO transport means every standard community MCP server (GitHub CLI, filesystem access, local databases, shell tools) can now be registered and governed through SmartFlow. This was the single largest gap in enterprise MCP coverage, and it’s now closed.

Semantic Tool Search

As organizations register more MCP servers, finding the right tool becomes its own challenge. SmartFlow 1.3 indexes every tool across every registered server with embeddings of the tool’s name, description, and parameter names. Users and agents can search in plain language—“read a file,” “send a Slack message,” “query the database”—and get back a ranked list of matching tools and the servers that host them. This enables intent-driven tool routing without hard-coded server knowledge.
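The ranking step described above can be sketched in a few lines. This is an illustration only: the `embed` function is a toy bag-of-words stand-in (SmartFlow's actual embedding model is not described in this post), and the tool names and server aliases in `TOOLS` are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical registry: (server alias, tool name, description, parameter names).
TOOLS = [
    ("filesystem", "read_file", "Read a file from disk", ["path"]),
    ("slack", "post_message", "Send a Slack message to a channel", ["channel", "text"]),
    ("warehouse", "run_query", "Query the database with SQL", ["sql"]),
]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. A real system would use a learned model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Index each tool on its name, description, and parameter names.
INDEX = [
    (server, name, embed(" ".join([name.replace("_", " "), desc, *params])))
    for server, name, desc, params in TOOLS
]

def search(query: str, k: int = 5):
    """Return up to k (server, tool, score) tuples, best match first."""
    q = embed(query)
    scored = [(cosine(q, vec), server, name) for server, name, vec in INDEX]
    scored.sort(key=lambda row: row[0], reverse=True)
    return [(server, name, round(score, 3)) for score, server, name in scored[:k]]
```

Calling `search("read a file")` ranks the hypothetical `read_file` tool on the `filesystem` server first, so a caller can route by intent without knowing which server hosts the tool.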

OAuth PKCE for User Consent

Many enterprise MCP tools require individual user authorization—think GitHub, Google Workspace, or Slack, where access is scoped to a specific user’s identity and permissions. SmartFlow 1.3 implements the full OAuth 2.0 PKCE flow for these scenarios. Users are redirected to the provider’s consent screen, authorize access with their own identity, and SmartFlow stores the resulting token scoped to that user and server pair. Sessions expire after 10 minutes if not completed, and tokens are managed independently per user.

Per-Server Credential Forwarding

Not all credentials should be stored centrally. SmartFlow 1.3 introduces per-server auth header forwarding, where clients pass server-specific credentials using scoped headers (x-mcp-{alias}-{header}). SmartFlow extracts and forwards them only to the intended server, then strips them before forwarding to the LLM. No credential leakage between servers. No central secret store required for ephemeral or user-specific tokens.
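A minimal sketch of the extract-and-strip step, assuming the `x-mcp-{alias}-{header}` naming from the text. The function name, the longest-alias-first matching rule, and the choice to drop headers for unknown aliases are assumptions made for illustration.

```python
PREFIX = "x-mcp-"

def split_mcp_headers(headers: dict, aliases: set):
    """Separate scoped MCP credentials from the headers that continue upstream.

    Returns (per_server, remaining). per_server maps alias -> headers meant for
    that server only; remaining is the input with every x-mcp-* entry stripped.
    """
    per_server = {}
    remaining = {}
    # Try longer aliases first so "internal-search" wins over a shorter "internal".
    ordered = sorted(aliases, key=len, reverse=True)
    for name, value in headers.items():
        lower = name.lower()
        if not lower.startswith(PREFIX):
            remaining[name] = value
            continue
        rest = lower[len(PREFIX):]
        for alias in ordered:
            if rest.startswith(alias + "-") and len(rest) > len(alias) + 1:
                per_server.setdefault(alias, {})[rest[len(alias) + 1:]] = value
                break
        # Scoped headers for unknown aliases are dropped, never forwarded.
    return per_server, remaining
```

Because aliases can themselves contain hyphens, the sketch matches against the set of registered aliases rather than splitting on the first hyphen.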

Server Aliases

MCP servers can now be assigned short, human-readable aliases independent of their URL or server ID. All routing, access control, and header forwarding resolves through the alias, which means server configurations can change without breaking integrations. Teams use names like “github” and “internal-search” instead of raw server identifiers.

A2A Agent Gateway: Governed Agent-to-Agent Communication

SmartFlow 1.3 implements the Google A2A (Agent-to-Agent) open protocol, making the platform interoperable with any A2A-compatible agent runtime. This is a significant expansion—SmartFlow can now govern not just how applications talk to LLMs, but how AI agents talk to each other.

Agents are registered as named profiles in Redis, each with a model, system prompt, optional MCP tool access, and a machine-readable Agent Card that advertises capabilities to other agents. The full task lifecycle is supported: submitted, working, completed, failed, and canceled, with every task persisted in Redis with complete message history and artifact outputs.
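The task lifecycle can be pictured as a small state machine. The five state names come from the text above; the allowed transitions and the `Task` class are assumptions for illustration, and a plain in-memory object stands in for the Redis record.

```python
from dataclasses import dataclass, field

# State names are from the release notes; the transition map is an assumption.
TRANSITIONS = {
    "submitted": {"working", "failed", "canceled"},
    "working": {"completed", "failed", "canceled"},
    "completed": set(),
    "failed": set(),
    "canceled": set(),
}

@dataclass
class Task:
    task_id: str
    state: str = "submitted"
    history: list = field(default_factory=list)    # message history
    artifacts: list = field(default_factory=list)  # artifact outputs

    def advance(self, new_state: str) -> None:
        """Move to new_state, rejecting transitions the map does not allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state} -> {new_state}")
        self.state = new_state

    @property
    def terminal(self) -> bool:
        """True once the task can no longer change state."""
        return not TRANSITIONS[self.state]
```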

Cross-agent tracing propagates a shared trace ID (X-A2A-Trace-Id) through the entire agent call chain, enabling post-hoc correlation of multi-agent workflows for debugging and audit.

The gateway is compatible out of the box with LangGraph, Vertex AI, Azure AI Foundry, Bedrock AgentCore, and Pydantic AI.

Intelligent Caching: More Control, More Coverage

SmartFlow’s 3-tier semantic cache—L1 in-process memory, L2 Redis with embedding similarity, L3 Redis exact match—is entirely native to the platform with no external vector database required. Version 1.3 adds three capabilities:

• Per-request cache controls: Applications can bypass cache read (no-cache), bypass cache write (no-store), set custom TTL, or scope to a namespace on individual requests. This means applications that mix cacheable and non-cacheable calls—deterministic queries alongside real-time lookups—can handle both in the same integration.

• Cache key response headers: Every cached response now includes an x-smartflow-cache-key header, enabling client-side invalidation, cache debugging, and cost attribution.

• MCP tool-call caching: Identical tool calls with identical parameters return instantly without re-invoking the MCP server. Per-server and per-tool hit statistics are visible in the dashboard.
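To illustrate what "identical tool calls with identical parameters" can mean in practice, here is a minimal sketch of a deterministic cache key plus hit counting. The key format, the `ToolCallCache` class, and the in-memory dictionary are assumptions for this example, not SmartFlow's implementation.

```python
import hashlib
import json

def tool_call_key(server: str, tool: str, params: dict) -> str:
    """Derive a deterministic key so identical calls map to the same entry."""
    # sort_keys and fixed separators make the JSON independent of dict ordering.
    canonical = json.dumps(params, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(f"{server}\n{tool}\n{canonical}".encode("utf-8")).hexdigest()
    return f"mcp:{server}:{tool}:{digest}"

class ToolCallCache:
    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    def call(self, server: str, tool: str, params: dict, invoke):
        """Return a cached result if present; otherwise invoke the tool and store it."""
        key = tool_call_key(server, tool, params)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = invoke(params)
        self._store[key] = result
        return result
```

Canonicalizing the parameters before hashing means `{"a": 1, "b": 2}` and `{"b": 2, "a": 1}` share one cache entry.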

Routing & Cost Control

Virtual Key Budget Enforcement

SmartFlow-issued virtual keys (sk-sf-...) now carry hard spend limits over a chosen budget period: daily, weekly, or monthly with automatic resets, or a lifetime limit that never resets. The critical detail: budgets are checked before every request. If the budget is exceeded, the request returns 429 before any cost is incurred. Spend is recorded after each response using actual token cost. This gives finance teams and platform administrators precise, enforceable cost control per user, per team, or per application.
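The check-before, record-after ordering can be sketched as follows. The `VirtualKey` fields and `handle_request` function are hypothetical names for this example, amounts are tracked in integer cents to avoid floating-point drift, and period resets are omitted.

```python
from dataclasses import dataclass

@dataclass
class VirtualKey:
    key_id: str
    limit_cents: int
    spent_cents: int = 0

def handle_request(key: VirtualKey, call_provider):
    """Check the budget first; only call the provider if budget remains.

    call_provider is a callable returning (response, cost_cents).
    """
    if key.spent_cents >= key.limit_cents:
        return 429, None                      # rejected before any cost is incurred
    response, cost_cents = call_provider()    # cost derived from actual token usage
    key.spent_cents += cost_cents             # spend recorded after the response
    return 200, response
```

In this sketch the final request admitted before the limit is reached can overshoot it by that request's cost, because the true cost is only known once the response arrives.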

Load Balancing with Fallback and Retry Chains

Named fallback chains define ordered lists of provider targets stored in Redis. When a target fails, SmartFlow applies intelligent retry logic: retryable errors (429 rate limits, 5xx server errors) get exponential backoff; non-retryable errors (all other 4xx client errors) bypass retry and move immediately to the next target. The result is high-availability LLM routing with automatic handling of rate limits and provider outages, with no application-level changes required.
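A minimal sketch of this retry-then-fall-back behavior, under stated assumptions: targets are plain callables, `ProviderError` is a hypothetical exception carrying the HTTP status, and a target whose retries are exhausted hands off to the next one in the chain. The retry count and base delay are illustrative defaults.

```python
import time

class ProviderError(Exception):
    def __init__(self, status: int):
        super().__init__(f"provider returned {status}")
        self.status = status

def is_retryable(status: int) -> bool:
    # 429 and 5xx are retried; every other 4xx moves straight to the next target.
    return status == 429 or 500 <= status <= 599

def call_with_fallback(targets, request, max_retries=3, base_delay=0.5, sleep=time.sleep):
    """Try each target in order; each takes the request and returns a response
    or raises ProviderError."""
    last_error = None
    for target in targets:
        for attempt in range(max_retries + 1):
            try:
                return target(request)
            except ProviderError as err:
                last_error = err
                if not is_retryable(err.status) or attempt == max_retries:
                    break                         # give up on this target
                sleep(base_delay * 2 ** attempt)  # delay doubles on each retry
    if last_error is None:
        raise RuntimeError("no targets configured")
    raise last_error
```

Passing `sleep` as a parameter keeps the function testable without real delays.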

Full Release Summary

| # | Feature | Area |
| --- | --- | --- |
| 1 | MCP SSE Transport | MCP Gateway |
| 2 | MCP STDIO Transport | MCP Gateway |
| 3 | Per-Request Cache Controls | Caching |
| 4 | Cache Key in Response Header | Caching |
| 5 | MCP Server Aliases | MCP Gateway |
| 6 | OAuth Client Credentials for MCP | MCP Auth |
| 7 | Per-Server Auth Header Forwarding | MCP Auth |
| 8 | Virtual Key Budget Enforcement | Key Management |
| 9 | Load Balancing with Fallback & Retry Chains | Routing |
| 10 | Semantic Tool Search | MCP Gateway |
| 11 | A2A Agent Gateway | Agent Orchestration |
| 12 | OAuth PKCE Browser Consent for MCP | MCP Auth |

What This Means

SmartFlow 1.3 reflects our belief that enterprise AI governance cannot be limited to LLM API calls. As organizations adopt MCP for tool integration and A2A for agent orchestration, the governance surface area expands dramatically. Every tool call, every agent-to-agent handoff, every cached response, and every budget decision needs the same level of visibility, policy enforcement, and auditability that enterprises expect from their network and identity infrastructure.

SmartFlow is the only platform that delivers all of this on-premises or in your private cloud, deployed as a container on Docker or Kubernetes with zero data egress by default. Multi-architecture support (AMD64 + ARM64) means it runs wherever your infrastructure lives.

To learn more or request an assessment, visit langsmart.ai.

Technical Appendix

Detailed API reference and architecture notes for engineering teams.

A. MCP Gateway API Reference

Semantic Tool Search:

GET /api/mcp/tools/search?q=read+a+file&k=5 — returns ranked tool matches across all servers

POST /api/mcp/tools/reindex — triggers full re-index of all registered servers

OAuth PKCE Flow:

GET /api/mcp/auth/initiate?server_id=...&user_id=... — initiates PKCE flow, returns browser redirect URL

GET /api/mcp/auth/callback?code=...&state=... — OAuth callback, exchanges code for token

Fallback Chains:

GET/POST/DELETE /api/routing/fallback-chains — CRUD for named fallback chain configurations

B. A2A Agent Gateway API Reference

| Endpoint | Description |
| --- | --- |
| GET /.well-known/agent.json | Gateway Agent Card listing all active agents |
| GET /a2a/{id}/.well-known/agent.json | Per-agent Agent Card |
| POST /a2a/{id} (tasks/send) | Synchronous task execution with full Task response |
| POST /a2a/{id} (tasks/sendSubscribe) | SSE stream of task status update events |
| POST /a2a/{id} (tasks/get) | Retrieve task + history + artifacts by ID |
| POST /a2a/{id} (tasks/cancel) | Cancel in-flight tasks |
| GET /api/a2a/agents | List all registered agents |
| POST /api/a2a/agents | Register a new agent |
| DELETE /api/a2a/agents/{id} | Remove an agent |
| GET /api/a2a/agents/{id}/tasks | Recent task history for an agent |
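As a rough illustration of a `tasks/send` call, the sketch below builds the request headers and body. It assumes SmartFlow accepts the JSON-RPC 2.0 envelope used by the published A2A protocol; the exact fields SmartFlow expects are not confirmed by this post, and `build_tasks_send` is a hypothetical helper.

```python
import json
import uuid
from typing import Optional

def build_tasks_send(text: str, task_id: Optional[str] = None,
                     trace_id: Optional[str] = None):
    """Return (headers, body) for a POST to /a2a/{id} using the tasks/send method."""
    body = {
        "jsonrpc": "2.0",
        "id": str(uuid.uuid4()),              # JSON-RPC request ID
        "method": "tasks/send",
        "params": {
            "id": task_id or str(uuid.uuid4()),   # task ID
            "message": {
                "role": "user",
                "parts": [{"type": "text", "text": text}],
            },
        },
    }
    headers = {"Content-Type": "application/json"}
    if trace_id:
        # Reuse the caller's trace ID so the whole agent chain can be correlated.
        headers["X-A2A-Trace-Id"] = trace_id
    return headers, json.dumps(body)
```

The returned pair can be handed to any HTTP client; forwarding the same `X-A2A-Trace-Id` on every downstream call is what lets a multi-agent workflow be reconstructed afterwards.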

C. Cache Architecture

SmartFlow’s 3-tier cache operates as a unified pipeline. On each request, the system checks L1 (in-process memory) first for sub-millisecond response on hot queries, then L2 (Redis with cosine similarity on query embeddings) for semantically equivalent matches, then L3 (Redis exact match on normalized request hash). Cache writes propagate back through all tiers. Adaptive TTL adjusts expiration based on query volatility—frequently changing topics expire sooner, stable queries persist longer.
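The lookup order can be sketched with in-memory stand-ins for each tier. Everything here is an assumption for illustration: the `TieredCache` class, the 0.9 similarity threshold, the promotion of lower-tier hits into L1, and the use of plain Python containers in place of Redis. Embeddings are passed in by the caller, and adaptive TTL is omitted.

```python
import hashlib
import math

class TieredCache:
    def __init__(self, threshold: float = 0.9):
        self.l1 = {}   # in-process memory: request hash -> response
        self.l2 = []   # stand-in for Redis: list of (embedding, response)
        self.l3 = {}   # stand-in for Redis: normalized request hash -> response
        self.threshold = threshold

    @staticmethod
    def _hash(prompt: str) -> str:
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    @staticmethod
    def _cosine(a, b) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        na = math.sqrt(sum(x * x for x in a))
        nb = math.sqrt(sum(y * y for y in b))
        return dot / (na * nb) if na and nb else 0.0

    def get(self, prompt: str, embedding):
        """Return (tier, response); tier is 'L1', 'L2', 'L3', or 'MISS'."""
        key = self._hash(prompt)
        if key in self.l1:
            return "L1", self.l1[key]
        best = max(self.l2, key=lambda e: self._cosine(embedding, e[0]), default=None)
        if best is not None and self._cosine(embedding, best[0]) >= self.threshold:
            self.l1[key] = best[1]            # promote to L1 (assumed behavior)
            return "L2", best[1]
        if key in self.l3:
            self.l1[key] = self.l3[key]       # promote to L1 (assumed behavior)
            return "L3", self.l3[key]
        return "MISS", None

    def put(self, prompt: str, embedding, response) -> None:
        """Write the response to all three tiers."""
        key = self._hash(prompt)
        self.l1[key] = response
        self.l2.append((embedding, response))
        self.l3[key] = response
```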

Per-request cache control headers:

• x-smartflow-cache: skip — bypass cache entirely

• x-smartflow-cache: no-cache — bypass read, write result back to cache

• x-smartflow-cache: no-store — read from cache, do not write result

• x-smartflow-cache-ttl: {seconds} — custom TTL for this request

• x-smartflow-cache-namespace: {name} — scope cache to a named partition
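The header semantics listed above reduce to a small decision table. The sketch below interprets them on the receiving side; the header names and values are from the list, while the `cache_policy` function and its returned dictionary shape are hypothetical.

```python
def cache_policy(headers: dict) -> dict:
    """Translate per-request cache headers into read/write/TTL/namespace settings."""
    h = {k.lower(): v.strip() for k, v in headers.items()}
    mode = h.get("x-smartflow-cache", "").lower()
    policy = {
        "read": mode not in ("skip", "no-cache"),    # no-cache bypasses reads
        "write": mode not in ("skip", "no-store"),   # no-store bypasses writes
        "ttl": None,
        "namespace": h.get("x-smartflow-cache-namespace"),
    }
    ttl = h.get("x-smartflow-cache-ttl")
    if ttl is not None and ttl.isdigit():
        policy["ttl"] = int(ttl)                     # seconds
    return policy
```

A request with no cache headers gets the default of reading and writing, which is why an application can mix cacheable and non-cacheable calls by setting the header only on the calls that need it.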

D. Deployment Requirements

SmartFlow 1.3 ships as a multi-architecture Docker image supporting AMD64 and ARM64, and deploys via Docker Compose or a Kubernetes Helm chart. Redis is required for caching, virtual key state, fallback chain configuration, MCP server registry, and A2A agent/task persistence. TimescaleDB is used for policy engine state and VAS time-series analytics. MongoDB provides archival storage for full request/response logs. All components deploy within the customer’s private cloud or data center with zero external dependencies and zero data egress by default.